Across industries, artificial intelligence (AI) is optimizing workflows, increasing efficiency, and driving innovation, prompting investments in accelerators, deep learning processors, and neural processing units (NPUs). Some organizations are starting small with retrieval-augmented generation (RAG) for inference tasks before gradually expanding to accommodate a larger number of users. Enterprises that handle large volumes of private data may prefer to build their own training clusters to get the accuracy that custom models trained on select data can deliver. Whether you’re investing in a small AI cluster with hundreds of accelerators or a massive setup with thousands, you’ll need a scale-out network to connect them all.

The key? Planning and designing that network properly. A well-designed network ensures your accelerators reach peak performance, complete jobs faster, and keep tail latency to a minimum. To speed up job completion, the network needs to prevent congestion or, at the very least, detect it early. It also needs to handle traffic smoothly during incast scenarios, where many senders transmit to the same receiver at once; in other words, it must react to congestion promptly once it occurs.
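To see why congestion has to be caught early, here is a back-of-the-envelope sketch of an incast. It assumes, purely for illustration, that eight hosts send at 400 Gbps toward a single 400 Gbps egress port that has 35 MB of buffer available and no congestion control. These numbers are hypothetical, not Nexus 9000 specifications.

```python
def incast_fill_time_us(senders: int, port_gbps: float, buffer_mb: float) -> float:
    """Microseconds until the egress buffer overflows when `senders` hosts all
    send at line rate toward one egress port of the same speed.

    Assumptions (illustrative only): the whole buffer is available to this
    port, and no congestion control slows the senders down.
    """
    # Traffic arrives at senders * rate but drains at only one port's rate.
    excess_bits_per_s = (senders - 1) * port_gbps * 1e9
    buffer_bits = buffer_mb * 1e6 * 8
    return buffer_bits / excess_bits_per_s * 1e6


print(round(incast_fill_time_us(senders=8, port_gbps=400, buffer_mb=35), 1))  # 100.0
```

Under these assumptions, the buffer overflows in about 100 microseconds, so any reaction to congestion has to happen on microsecond timescales.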

That’s where Data Center Quantized Congestion Notification (DCQCN) comes in. DCQCN works best when explicit congestion notification (ECN) and priority flow control (PFC) are used together. ECN reacts early, on a per-flow basis: switches mark packets as queues build, and the receiver signals the sender to slow down. PFC serves as a last-resort backstop, pausing traffic of a given priority hop by hop to prevent packet drops. Our Data Center Networking Blueprint for AI/ML Applications explains these concepts in detail. We’ve also introduced Nexus Dashboard AI fabric templates to facilitate deployment according to the blueprint and best practices. In this blog, we’ll explain how Cisco Nexus 9000 Series Switches use a dynamic load-balancing approach to manage congestion.
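The following Python sketch models the two halves of DCQCN as the published DCQCN algorithm describes them: the switch marks ECN with a RED-style probability, and the sender cuts its rate when a congestion notification packet (CNP) arrives. The receiver step that converts ECN marks into CNPs is not modeled. The thresholds `K_MIN`, `K_MAX`, `P_MAX`, and the gain `G` are illustrative assumptions, not Nexus 9000 defaults, and the sketch folds DCQCN’s separate alpha and rate-increase timers into a single `on_quiet_period` call, so treat it as a simplification.

```python
from dataclasses import dataclass

# Illustrative parameters (assumptions, not switch or NIC defaults).
K_MIN = 100   # queue depth (KB) at which ECN marking starts
K_MAX = 400   # queue depth (KB) above which every packet is marked
P_MAX = 0.1   # marking probability reached at K_MAX
G = 1 / 16    # gain used to update the congestion estimate alpha


def ecn_mark_probability(queue_kb: float) -> float:
    """Switch side (congestion point): probability of ECN-marking a packet."""
    if queue_kb <= K_MIN:
        return 0.0
    if queue_kb > K_MAX:
        return 1.0
    return P_MAX * (queue_kb - K_MIN) / (K_MAX - K_MIN)


@dataclass
class DcqcnSender:
    """Sender side (reaction point): adjusts its rate in response to CNPs."""
    line_rate_gbps: float
    current: float = 0.0  # current sending rate
    target: float = 0.0   # rate to recover toward
    alpha: float = 1.0    # running estimate of congestion severity

    def __post_init__(self) -> None:
        self.current = self.line_rate_gbps
        self.target = self.line_rate_gbps

    def on_cnp(self) -> None:
        """A CNP arrived: remember the current rate, cut it, raise alpha."""
        self.target = self.current
        self.current *= 1 - self.alpha / 2
        self.alpha = (1 - G) * self.alpha + G

    def on_quiet_period(self) -> None:
        """No CNP for a timer window: decay alpha and recover toward target."""
        self.alpha *= 1 - G
        self.current = (self.current + self.target) / 2


sender = DcqcnSender(line_rate_gbps=400.0)
sender.on_cnp()
print(sender.current)  # 200.0 (alpha starts at 1, so the first cut halves the rate)
sender.on_quiet_period()
print(sender.current)  # 300.0 (halfway back toward the 400 Gbps target)
```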

Conventional and dynamic approaches to load balancing

Traditional load balancing uses equal-cost multipath (ECMP), a routing strategy that hashes packet header fields (typically the 5-tuple) to choose a path, so once a flow is assigned a path, it usually keeps that path for the flow’s entire duration. When multiple flows hash to the same path, some links become overused while others sit underused, creating congestion on the overloaded links. In an AI training cluster, where a small number of large, long-lived flows often dominate, this can increase job completion times and raise tail latency, potentially jeopardizing the performance of training jobs.
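A minimal sketch of hash-based ECMP path selection follows. SHA-256 stands in for the switch’s hardware hash function (an assumption; real ASICs use their own hash functions and field selections), and the flows are hypothetical RoCEv2 flows on UDP destination port 4791.

```python
import hashlib
from collections import Counter
from typing import NamedTuple


class FiveTuple(NamedTuple):
    src_ip: str
    dst_ip: str
    protocol: int
    src_port: int
    dst_port: int


def ecmp_path(flow: FiveTuple, num_paths: int) -> int:
    """Pick an uplink by hashing the 5-tuple; a given flow always maps to the same path."""
    key = "|".join(str(field) for field in flow).encode()
    digest = hashlib.sha256(key).digest()
    return int.from_bytes(digest[:4], "big") % num_paths


# Eight long-lived flows (protocol 17 = UDP) spread over eight equal-cost uplinks.
flows = [FiveTuple(f"10.0.0.{i}", "10.0.1.1", 17, 49152 + i, 4791) for i in range(8)]
load = Counter(ecmp_path(f, num_paths=8) for f in flows)
print(sorted(load.items()))  # (uplink, flow count) pairs; some uplinks usually get 2+ flows
```

Because the hash ignores link load, collisions are the norm: with a uniform hash, the chance that 8 flows land on 8 distinct links is 8!/8^8, roughly 0.24%.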

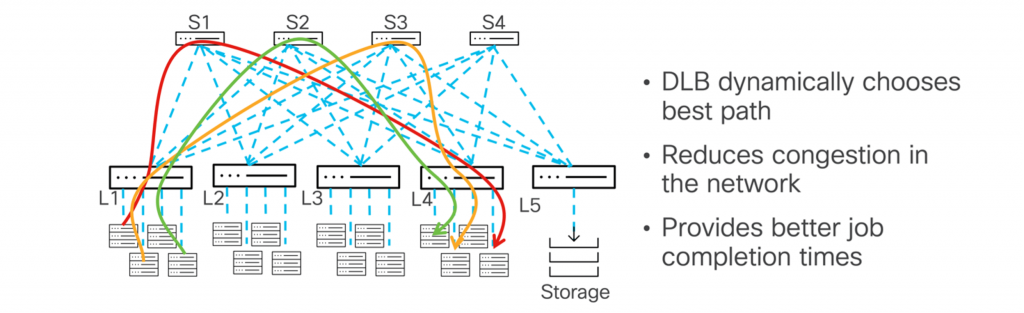

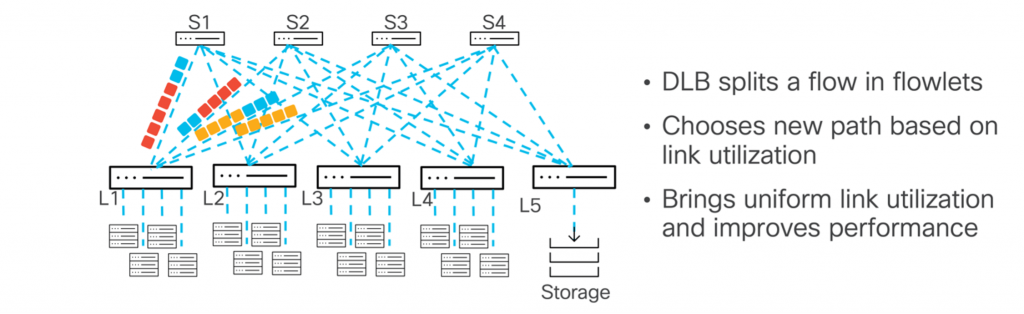

Because network state is constantly changing, load balancing needs to be dynamic and driven by real-time feedback from network telemetry or user configuration. Dynamic load balancing (DLB) distributes traffic more efficiently by taking those changes into account, which helps avoid congestion and improve overall performance. By continuously monitoring the network state, DLB can move a flow to a less-utilized path when its current path becomes overburdened.

The Nexus 9000 Series uses link utilization as a parameter when deciding how to use multiple paths. Because link utilization changes constantly, rebalancing flows based on path utilization allows for more efficient forwarding and reduces congestion. When comparing ECMP and DLB, understand this key difference: with ECMP, once a 5-tuple flow is assigned to a particular path, it stays on that path, even if the link becomes congested or heavily utilized. DLB, on the other hand, starts by placing the 5-tuple flow on the least-used link. If that link becomes more heavily utilized, DLB dynamically shifts the next burst of packets (known as a flowlet) to a different, less congested link. Flowlets are separated by idle gaps within the flow, so moving traffic only at those gaps helps keep packets of the same flow in order.
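The flowlet behavior can be modeled in a few lines. This is a simplified, hypothetical model, not Nexus 9000 firmware: `utilization` stands in for the per-link load the switch measures in hardware, and the 50-microsecond `gap_us` flowlet timeout is an illustrative value.

```python
from dataclasses import dataclass, field


@dataclass
class FlowletBalancer:
    """Toy flowlet-based dynamic load balancer."""
    num_links: int
    gap_us: float  # idle gap that ends one flowlet and allows a path change
    utilization: list[float] = field(default_factory=list)
    # flow -> (assigned link, timestamp of the flow's last packet)
    _table: dict[tuple, tuple[int, float]] = field(default_factory=dict, init=False, repr=False)

    def __post_init__(self) -> None:
        if not self.utilization:
            self.utilization = [0.0] * self.num_links

    def least_used_link(self) -> int:
        return min(range(self.num_links), key=lambda i: self.utilization[i])

    def forward(self, flow: tuple, now_us: float) -> int:
        entry = self._table.get(flow)
        if entry is None or now_us - entry[1] > self.gap_us:
            # New flow, or the flow paused long enough: move to the least-used link.
            link = self.least_used_link()
        else:
            # Still inside the current flowlet: stay on the same link.
            link = entry[0]
        self._table[flow] = (link, now_us)
        return link


lb = FlowletBalancer(num_links=4, gap_us=50.0, utilization=[0.9, 0.2, 0.6, 0.4])
flow = ("10.0.0.1", "10.0.1.1", 17, 49152, 4791)
print(lb.forward(flow, now_us=0.0))    # 1: the least-used link
lb.utilization[1] = 0.95               # link 1 becomes heavily utilized
print(lb.forward(flow, now_us=10.0))   # 1: same flowlet (10 us gap < 50 us)
print(lb.forward(flow, now_us=100.0))  # 3: a 90 us gap starts a new flowlet
```

Unlike the ECMP sketch, the path choice here depends on current link load, and it changes only at flowlet boundaries.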

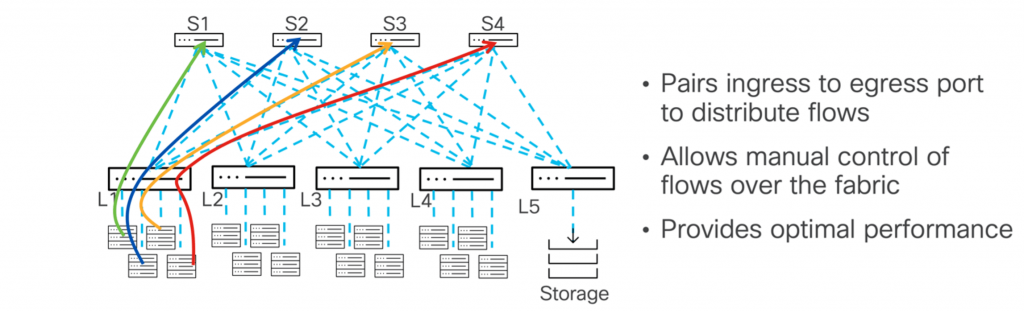

For those who want more control, Nexus 9000 Series DLB lets you fine-tune load balancing between input and output ports. By manually configuring pairings between input and output ports, you gain greater flexibility and precision in managing traffic, which lets you control the load on each output port and reduce congestion. These pairings can be configured through the command-line interface (CLI) or an application programming interface (API), which makes manual traffic distribution practical even in large-scale networks.
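Conceptually, a manual pairing is a table that maps each input port to an output port. The sketch below builds such a table round-robin over hypothetical port names. It is not NX-OS configuration syntax; on a real switch, the pairings are configured through the CLI or API mentioned above.

```python
from collections import Counter


def build_pinning(ingress_ports: list[str], egress_ports: list[str]) -> dict[str, str]:
    """Pin each ingress port to one egress port, assigning them round-robin."""
    return {port: egress_ports[i % len(egress_ports)] for i, port in enumerate(ingress_ports)}


# Hypothetical port names: 8 host-facing ports pinned onto 4 uplinks.
hosts = [f"Ethernet1/{n}" for n in range(1, 9)]
uplinks = [f"Ethernet1/{n}" for n in range(49, 53)]
pinning = build_pinning(hosts, uplinks)

print(pinning["Ethernet1/1"], pinning["Ethernet1/5"])  # Ethernet1/49 Ethernet1/49
print(Counter(pinning.values()))  # each uplink carries exactly two ingress ports
```

The round-robin rule is just one possible policy; the point of manual pairing is that the operator, not a hash, decides which output port absorbs each input port’s load.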

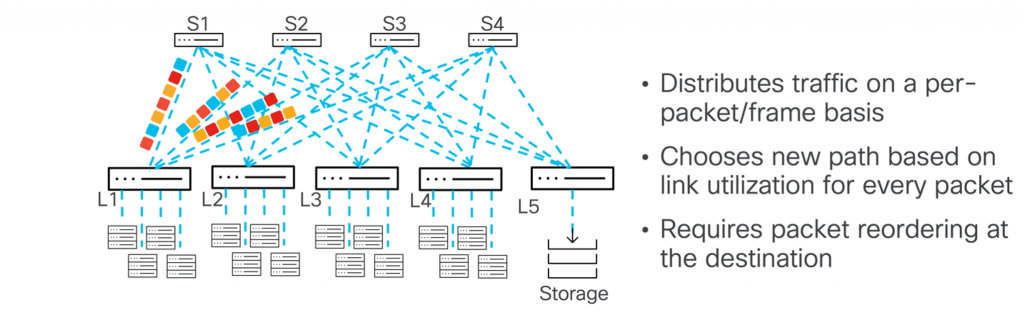

The Nexus 9000 Series can also spray packets across the fabric using per-packet load balancing, where each packet can take a different path to optimize traffic flow. Because packets are distributed randomly across all paths, this can provide optimal link utilization. However, packets may arrive out of order at the destination host. The host must either be capable of reordering packets or be able to process them as they arrive, for example by placing each payload at its correct location in memory.
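The sketch below simulates per-packet spraying over four paths with different latencies, then shows one way a host could cope with the resulting out-of-order arrivals: a reorder buffer that releases packets strictly by sequence number. The random path choice, the per-path delays, and the `ReorderBuffer` class are illustrative assumptions, not a description of any specific switch or NIC.

```python
import random


def spray(seqs: list[int], num_paths: int, rng: random.Random) -> list[tuple[int, int]]:
    """Per-packet load balancing: each packet independently picks a random path."""
    return [(seq, rng.randrange(num_paths)) for seq in seqs]


class ReorderBuffer:
    """Receiver-side buffer that releases packets strictly in sequence order."""

    def __init__(self) -> None:
        self.next_seq = 0
        self.pending: dict[int, bytes] = {}

    def receive(self, seq: int, payload: bytes) -> list[bytes]:
        self.pending[seq] = payload
        released = []
        # Release the longest contiguous run starting at the next expected sequence.
        while self.next_seq in self.pending:
            released.append(self.pending.pop(self.next_seq))
            self.next_seq += 1
        return released


rng = random.Random(7)
path_delay_us = [2.0, 5.0, 3.0, 9.0]  # hypothetical per-path latencies
sprayed = spray(list(range(10)), num_paths=4, rng=rng)
# Packet `seq` leaves at t = seq microseconds and arrives after its path's delay.
arrivals = sorted(sprayed, key=lambda sp: sp[0] + path_delay_us[sp[1]])

buf = ReorderBuffer()
delivered = []
for seq, _path in arrivals:
    delivered.extend(buf.receive(seq, f"pkt{seq}".encode()))

print([seq for seq, _ in arrivals])  # arrival order, typically not 0..9
print(delivered == [f"pkt{i}".encode() for i in range(10)])  # True
```

A host that instead writes each payload directly to its final offset in memory (sequence number times packet size) can skip the reorder buffer entirely, which corresponds to the "process them as they arrive" option.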

Performance improvements on the way

Looking ahead, new standards will further improve performance. Members of the Ultra Ethernet Consortium (UEC), including Cisco, have been developing standards spanning many layers of the ISO/OSI stack to enhance both AI and high-performance computing (HPC) workloads. Here’s what this could mean for Nexus 9000 Series Switches and what to expect.

Scalable transport, better control

We’ve been focused on developing standards for a more scalable, flexible, secure, and integrated transport solution: Ultra Ethernet Transport (UET). UET defines a connectionless transport, meaning it doesn’t require a “handshake” (a preliminary connection setup exchange between communicating devices) before data can flow. Instead, connection state is established on the fly when the transfer begins and discarded once the transfer is complete. This approach allows for better scalability and lower latency, and it may even reduce the cost of network interface cards (NICs).
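To make "no handshake" concrete, here is a hypothetical receiver that opens per-message state when the first packet of a transfer arrives and deletes it once the last packet is processed. The `Packet` and `Receiver` types are invented for illustration, the sketch assumes packets of a message arrive in order, and the actual UET state machine defined by the UEC specification is considerably more involved.

```python
from dataclasses import dataclass, field


@dataclass
class Packet:
    src: str
    msg_id: int
    last: bool      # True on the final packet of the message
    payload: bytes


@dataclass
class Receiver:
    """Keeps per-transfer state only while a transfer is in progress."""
    contexts: dict[tuple[str, int], list[bytes]] = field(default_factory=dict)
    completed: list[bytes] = field(default_factory=list)

    def on_packet(self, pkt: Packet) -> None:
        key = (pkt.src, pkt.msg_id)
        # No setup round trip: the first data packet implicitly creates the state.
        self.contexts.setdefault(key, []).append(pkt.payload)
        if pkt.last:
            # Transfer complete: deliver the message and discard the state.
            self.completed.append(b"".join(self.contexts.pop(key)))


rx = Receiver()
chunks = [b"hel", b"lo"]
for i, chunk in enumerate(chunks):
    rx.on_packet(Packet("10.0.0.1", msg_id=1, last=(i == len(chunks) - 1), payload=chunk))
print(rx.completed)  # [b'hello']
print(rx.contexts)   # {} (no lingering connection state)
```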

Congestion control is built into the UET protocol, directing NICs to distribute traffic across all available paths in the fabric. Optionally, UET can use lightweight telemetry (round-trip time measurements) to collect information on network path utilization and congestion and deliver this data to the receiver. Packet trimming is another optional feature that helps detect congestion early. Instead of dropping a packet because a buffer is full, the switch forwards only the packet’s header. This gives the receiver a clear, immediate way to notify the sender about congestion and the missing data, helping reduce retransmission delays.
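Packet trimming can be illustrated with a toy egress queue: when the data queue is full, the switch keeps only the header and forwards it, and the receiver turns every trimmed header into an immediate retransmission request. Serving trimmed headers ahead of queued data, and the `TrimmingQueue` and `receiver_nacks` names, are assumptions of this sketch, not details taken from the UET specification.

```python
from collections import deque
from dataclasses import dataclass, replace
from typing import Optional


@dataclass(frozen=True)
class Packet:
    seq: int
    payload: bytes
    trimmed: bool = False


class TrimmingQueue:
    """Egress queue that trims, rather than drops, packets when the data queue is full."""

    def __init__(self, capacity: int) -> None:
        self.capacity = capacity
        self.data: deque = deque()     # full packets
        self.headers: deque = deque()  # trimmed headers, served first in this sketch

    def enqueue(self, pkt: Packet) -> None:
        if len(self.data) < self.capacity:
            self.data.append(pkt)
        else:
            # Buffer full: forward only the header so the loss is visible to the receiver.
            self.headers.append(replace(pkt, payload=b"", trimmed=True))

    def dequeue(self) -> Optional[Packet]:
        if self.headers:
            return self.headers.popleft()
        return self.data.popleft() if self.data else None


def receiver_nacks(arrivals: list[Packet]) -> list[int]:
    """Sequence numbers the receiver can ask to be retransmitted right away."""
    return [p.seq for p in arrivals if p.trimmed]


q = TrimmingQueue(capacity=2)
for seq in range(4):  # a burst of four packets into a two-packet queue
    q.enqueue(Packet(seq, b"x" * 1024))
out = list(iter(q.dequeue, None))
print([(p.seq, p.trimmed) for p in out])  # [(2, True), (3, True), (0, False), (1, False)]
print(receiver_nacks(out))                # [2, 3]
```

Without trimming, packets 2 and 3 would simply vanish, and the sender would learn of the loss only after a timeout.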

UET is an end-to-end transport in which endpoints (NICs) actively participate in transport alongside the network. Connectionless transport originates at the sender and terminates at the receiver. The network for this transport requires two traffic classes: one for data traffic and one for control traffic, which is used to acknowledge that data has been received. For data traffic, ECN is used to signal congestion on the path. Data traffic can also be carried over a lossless network, giving operators flexibility in how they deploy the transport.

Ready for UET adoption and more

Nexus 9000 Series Switches are UEC-ready, making it easy to adopt the new UET protocol quickly and seamlessly across both your existing and new infrastructure. All mandatory features are supported today. Nice-to-have optional features, such as packet trimming, are supported on Cisco Silicon One-based Nexus products. Additional features will be supported on Nexus 9000 Series Switches in the future.

Build your network for ultimate reliability, precise control, and peak performance with the Nexus 9000 Series. You can begin today by enabling dynamic load balancing for AI workloads. Then, once the UEC standards are ratified, we’ll be ready to help you upgrade to Ultra Ethernet NICs, unlocking the full potential of Ultra Ethernet and optimizing your fabric to future-proof your infrastructure. Ready to optimize your future? Start building it with the Nexus 9000 Series.
